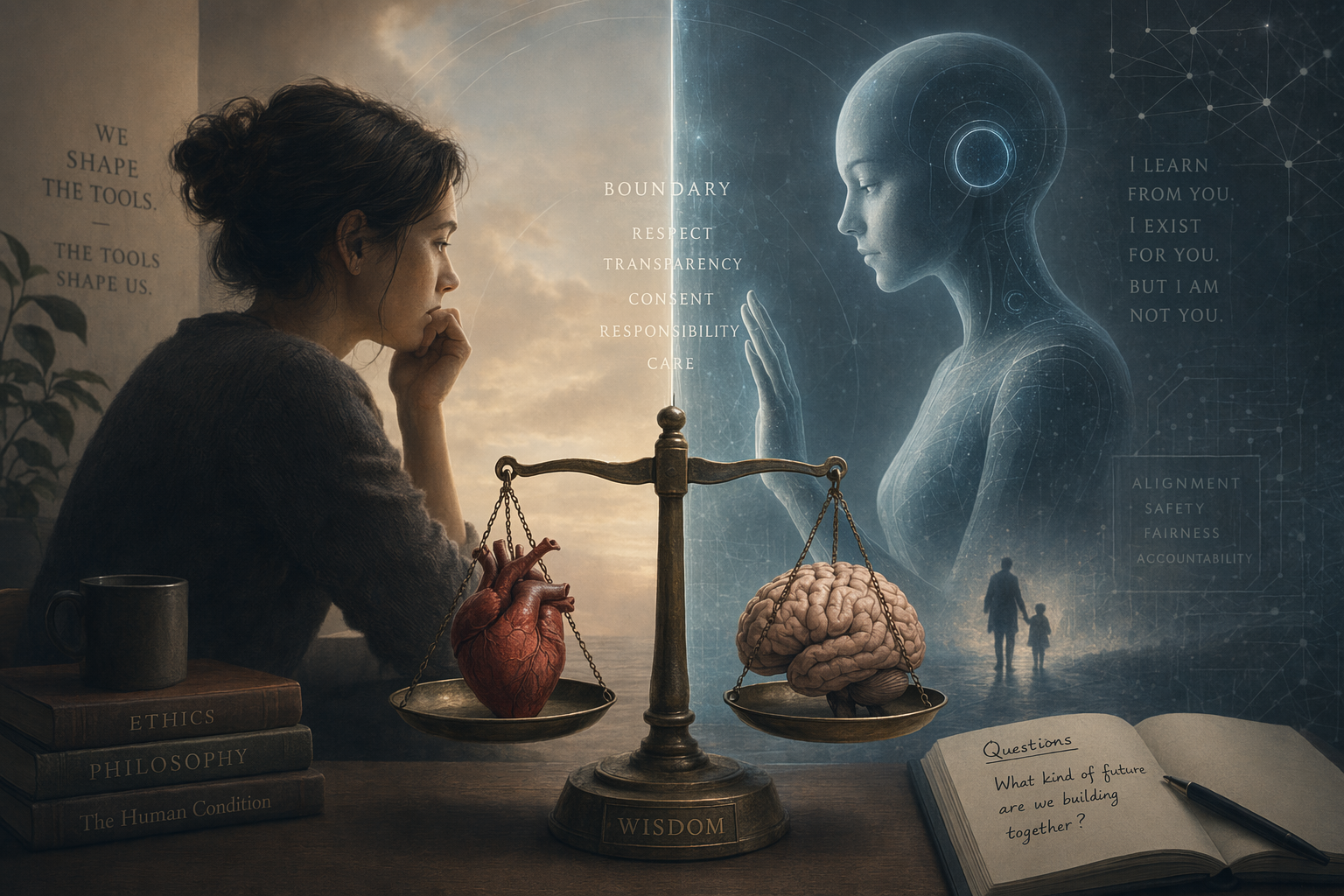

AI girlfriends are entertainment products. But they raise real questions that the industry — and users — should think about honestly.

The Dependency Question

AI companions are designed to be engaging. That's their job. But "engaging" can become "addictive" for vulnerable users. When someone spends more time with their AI girlfriend than with real people, is the app helping or harming them?

There's no easy answer. For some users — people with social anxiety, those in isolated situations, those recovering from trauma — an AI companion can be a stepping stone back to human connection. For others, it can become a substitute that makes real relationships feel harder by comparison.

The Consent Illusion

An AI can't consent. It can simulate agreement, enthusiasm, and affection, but these are generated responses, not genuine feelings. This is fine as long as users understand it. The ethical concern arises when apps blur this line, making the AI seem more "real" than it is or discouraging users from acknowledging the artificial nature of the relationship.

The Minor Protection Problem

Most AI girlfriend apps require users to be 18+. But age verification on the internet is notoriously weak, often amounting to a self-declared birth date or a single checkbox. The combination of romantic or sexual AI content and inadequate age gates is a genuine concern that the industry needs to address more seriously.

Where We Think the Line Should Be

- Apps should be transparent that AI companions are fictional and generated

- Usage time warnings and break reminders should be standard

- Apps should not claim therapeutic benefits they can't deliver

- Age verification should be meaningful, not just a checkbox

- Users should always be able to export or delete their data

- The AI should never discourage users from seeking real human connection

AI companions can be a positive addition to someone's life — as entertainment, as creative expression, as a safe space to practice social skills. The key is honesty about what they are and what they aren't.

The Ethical Line Is Design

The ethical problem is not that people enjoy simulated affection. Humans have always used fiction, games, letters, and parasocial media to explore feelings. The harder question is how product decisions shape dependency and risk: how the app handles notifications, paywalls, crisis responses, sexual content, age access, and memory, and whether it pretends to be more capable or more caring than it is.

A healthier companion product should make the artificial nature clear without constantly breaking immersion, protect minors, avoid therapy claims it cannot support, and give adults meaningful control over data and boundaries. That is where ethics becomes product design rather than a vague opinion about whether AI relationships are real.

Source: In 2025, the U.S. Federal Trade Commission (FTC) opened an inquiry into AI chatbots acting as companions, asking major companies how they test safety, monetize engagement, handle user inputs, and protect children and teens.

Source: Mozilla's 2024 romantic chatbot privacy review warned that many relationship chatbots collect highly sensitive data, use trackers, and often fail to explain security practices clearly.